A buffer overflow (also known as a buffer overrun) is a programming error in which a program writes more data into a memory buffer than the buffer can hold. The excess data spills past the end of the buffer and overwrites adjacent memory, corrupting whatever was stored there and often crashing the program.

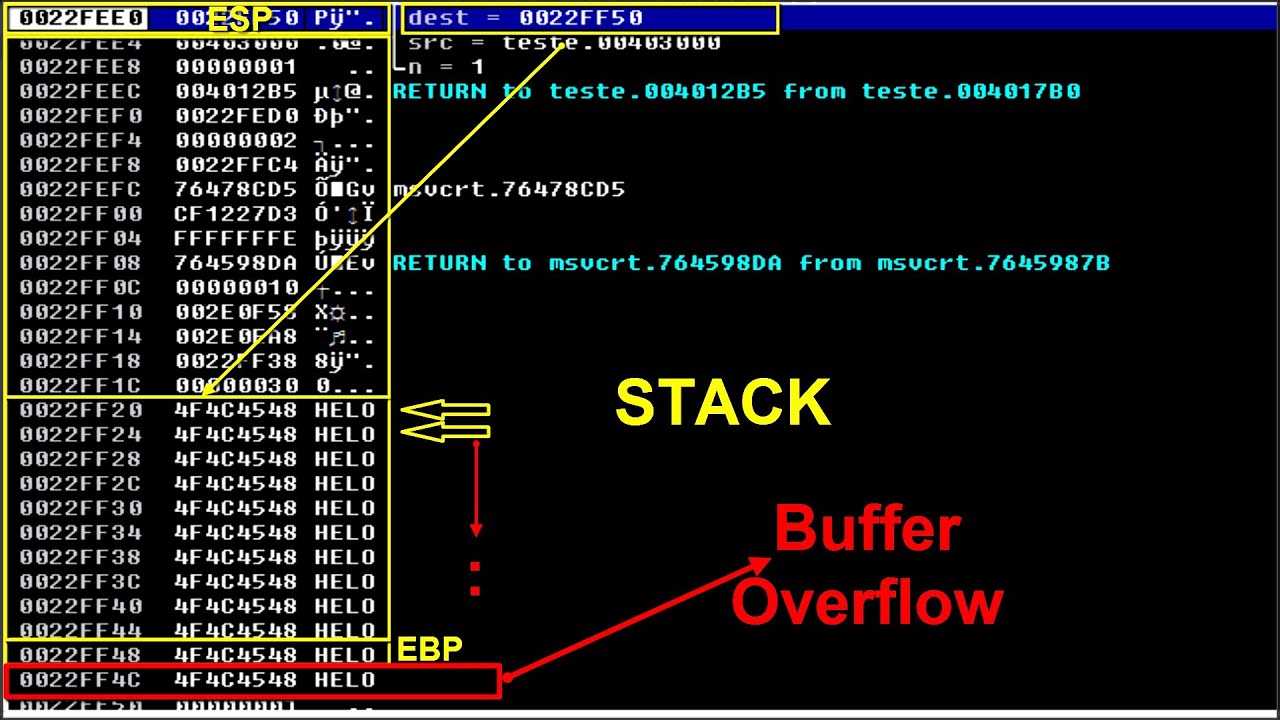

Buffer overflows can occur on the stack, on the heap, or in other writable regions such as memory-mapped registers. They can be caused by incorrectly written application code or by interactions between application code and the underlying operating system. Stack buffer overflows are among the most common types of security vulnerability.

Because a buffer overflow writes past the end of the buffer, it can corrupt neighboring data, crash the application, or even cause a system outage. The consequences depend on the particular system and on how the overflow is exploited. For instance, an attacker can use a buffer overflow to inject malicious code into the system and potentially gain complete control of it.
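To make the corruption concrete, here is a minimal C sketch that simulates it safely. The layout is an assumption made for illustration: a single 16-byte array stands in for adjacent memory, with bytes 0 to 7 acting as the buffer and byte 8 acting as an unrelated `is_admin`-style flag. The names `unchecked_write` and `flag_after_write` are hypothetical, and all writes stay inside the array, so the sketch itself has well-defined behavior.

```c
#include <string.h>

/* Hypothetical layout: one 16-byte region standing in for adjacent memory.
   Bytes 0..7 are the "buffer"; byte 8 is an unrelated flag. */
enum { REGION_SIZE = 16, BUF_SIZE = 8, FLAG_OFFSET = 8 };

/* Copies len bytes to the start of the region without comparing len
   against BUF_SIZE, mimicking an unchecked write. The caller must keep
   len <= REGION_SIZE so the simulation stays inside the array. */
void unchecked_write(unsigned char region[REGION_SIZE],
                     const unsigned char *data, size_t len)
{
    memcpy(region, data, len);
}

/* Writes len bytes of 'A' into the buffer and returns the flag byte
   afterwards. The flag starts at 0. */
int flag_after_write(size_t len)
{
    unsigned char region[REGION_SIZE] = {0};
    unsigned char input[REGION_SIZE];

    memset(input, 'A', sizeof input);
    if (len > REGION_SIZE) {
        len = REGION_SIZE; /* clamp so the demo never leaves the array */
    }
    unchecked_write(region, input, len);
    return region[FLAG_OFFSET];
}
```

With this layout, `flag_after_write(8)` leaves the flag at 0 because the input exactly fills the buffer, while `flag_after_write(9)` returns `'A'`: a single extra byte has silently changed data the program never meant to touch. In a real overflow the neighboring memory is not under the programmer's control, and the behavior is undefined.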

Buffer overflows can be prevented through careful programming. Useful techniques include checking memory boundaries before every write, validating the length of input, and using libraries or frameworks that perform these checks, all of which help detect and prevent buffer overflow attacks. Additionally, languages such as Java and C# check array bounds at run time, which prevents most buffer overflows in ordinary managed code.
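As a rough illustration of a boundary check combined with input validation, the following C sketch rejects any input that does not fit the destination. The helper name `copy_checked` is hypothetical, and the sketch assumes `src` is a NUL-terminated string; it is one possible shape for such a check, not a complete defense.

```c
#include <stdbool.h>
#include <stddef.h>
#include <string.h>

/* Hypothetical helper: copies src into dst only if it fits, including the
   terminating NUL. Returns true on success, false if the input is rejected.
   Assumes src points to a NUL-terminated string. */
bool copy_checked(char *dst, size_t dst_size, const char *src)
{
    if (dst == NULL || src == NULL || dst_size == 0) {
        return false;
    }

    size_t len = strlen(src);
    if (len >= dst_size) {
        /* No room for len bytes plus the NUL: reject the input rather
           than truncate it or write past the end of dst. */
        return false;
    }

    memcpy(dst, src, len + 1); /* len + 1 also copies the NUL */
    return true;
}
```

A caller with `char buf[8]` can pass `sizeof buf` as `dst_size`: a 7-character string is accepted, while an 8-character string is rejected because there would be no room for the terminator. Rejecting oversized input, rather than truncating it, is a design choice made here for clarity; some applications may prefer truncation with an explicit error report.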

In conclusion, buffer overflows are a serious security vulnerability that can lead to system outages, data corruption, and malicious code execution. Boundary checks, input validation, and languages with built-in bounds checking can help identify and prevent buffer overflows and their associated security risks.