Collinearity in regression analysis (often called multicollinearity when more than two predictors are involved) is the phenomenon in which two or more predictor variables in a multiple regression model are highly correlated, meaning that one can be predicted from the others by a linear relationship with substantial accuracy. It can arise from data collection or encoding errors, redundant features, spurious relationships, or natural clustering of observations.

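Predictors that are highly correlated with one another are said to be collinear. Collinearity inflates the variance of the affected coefficient estimates: their standard errors are larger than they would be with uncorrelated predictors, and the estimates can change sharply, or even flip sign, in response to small changes in the data. Predictive accuracy often suffers less than the coefficient estimates do, provided new data follow the same correlation pattern, but it becomes difficult to separate the individual effect of each predictor. As a result, highly collinear predictors can make important variables appear non-significant and lead to erroneous conclusions.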
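The effect on standard errors can be made precise for ordinary least squares. As a sketch, assuming a model with an intercept and errors of constant variance $\sigma^2$ (the symbols here are introduced for illustration and are not part of the original text), the sampling variance of the $j$-th coefficient is

$$
\operatorname{Var}(\hat\beta_j) \;=\; \frac{\sigma^2}{\sum_{i=1}^{n} (x_{ij} - \bar{x}_j)^2} \cdot \frac{1}{1 - R_j^2},
$$

where $R_j^2$ is the coefficient of determination obtained by regressing predictor $j$ on all the other predictors. The second factor equals 1 when predictor $j$ is uncorrelated with the others and grows without bound as $R_j^2$ approaches 1. For example, $R_j^2 = 0.9$ multiplies the variance by 10, which inflates the standard error by a factor of $\sqrt{10} \approx 3.16$.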

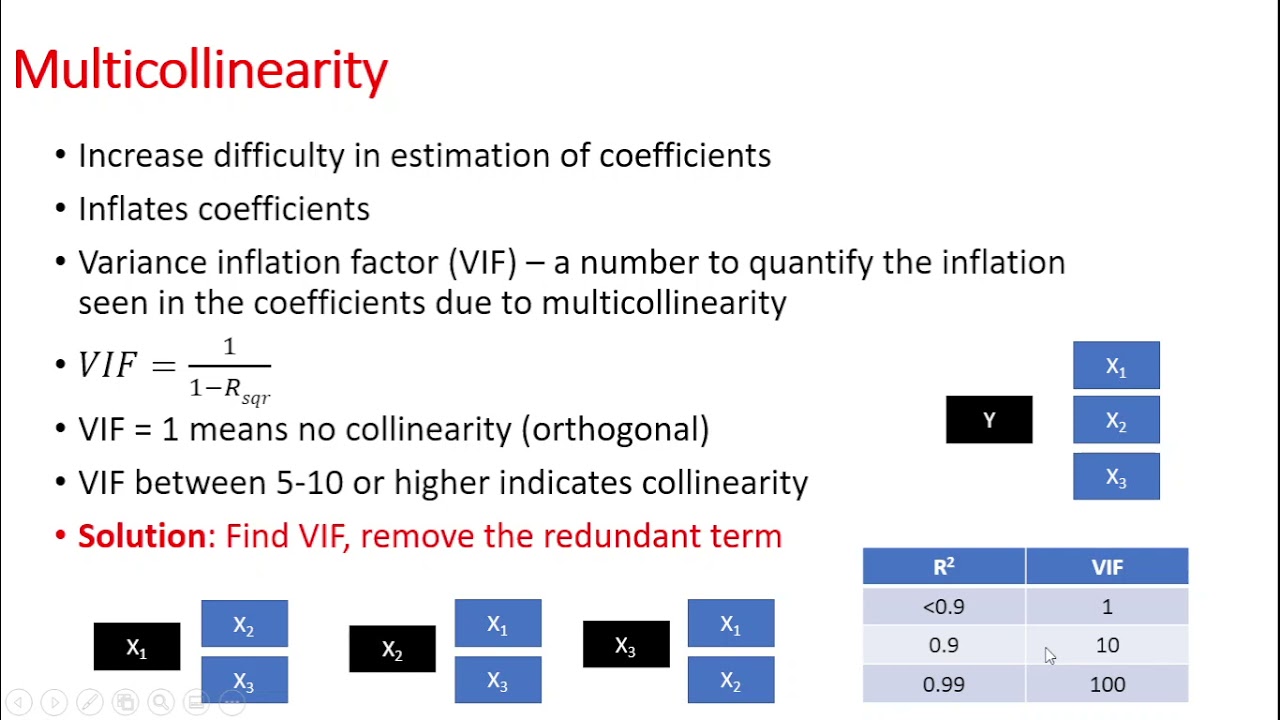

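Several techniques are used to detect collinearity in regression models. The most common are computing the pairwise correlation coefficients between predictors and examining the variance inflation factor (VIF) of each predictor. Common rules of thumb treat an absolute pairwise correlation above roughly 0.8, or a VIF above 5 to 10, as evidence of problematic collinearity, although these cutoffs are conventions rather than strict limits. Pairwise correlations can miss a predictor that is nearly a linear combination of several others, which the VIF does capture. When collinearity is found, the model should be modified accordingly, for example by dropping or combining redundant predictors or by using a regularized method such as ridge regression.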
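Both diagnostics are easy to compute directly. The following is a minimal sketch, not a reference implementation: it uses only NumPy, computes each VIF as $1 / (1 - R_j^2)$ by regressing one predictor on the rest, and runs on synthetic data. The function name `variance_inflation_factors` and the variables `x1`, `x2`, `x3` are hypothetical and chosen for illustration.

```python
import numpy as np


def variance_inflation_factors(X):
    """Return the VIF of each column of X (shape: n_samples x n_features).

    VIF_j = 1 / (1 - R_j^2), where R_j^2 comes from regressing column j
    on all other columns plus an intercept.
    """
    X = np.asarray(X, dtype=float)
    n, p = X.shape
    vifs = np.empty(p)
    for j in range(p):
        y = X[:, j]
        others = np.delete(X, j, axis=1)
        A = np.column_stack([np.ones(n), others])  # add intercept column
        coef, *_ = np.linalg.lstsq(A, y, rcond=None)
        resid = y - A @ coef
        ss_res = resid @ resid
        ss_tot = ((y - y.mean()) ** 2).sum()
        # 1 / (1 - R^2) simplifies to ss_tot / ss_res; the value becomes
        # very large (or infinite) when a column is an exact linear
        # combination of the others.
        vifs[j] = ss_tot / ss_res
    return vifs


# Synthetic data (an assumption for illustration): x2 is nearly a copy
# of x1, while x3 is independent of both.
rng = np.random.default_rng(0)
n = 500
x1 = rng.normal(size=n)
x2 = x1 + 0.1 * rng.normal(size=n)
x3 = rng.normal(size=n)
X = np.column_stack([x1, x2, x3])

corr = np.corrcoef(X, rowvar=False)   # pairwise correlation matrix
vifs = variance_inflation_factors(X)

print("Pairwise correlations:\n", np.round(corr, 3))
print("VIFs:", np.round(vifs, 2))
```

In this setup the first two predictors should show a pairwise correlation close to 1 and VIFs far above the usual cutoffs, while the third should have a VIF near 1.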

In summary, collinearity is an important issue in regression analysis. It can produce unstable coefficient estimates, inflated standard errors, and misleading results, so it should be detected and addressed before conclusions are drawn from the model.