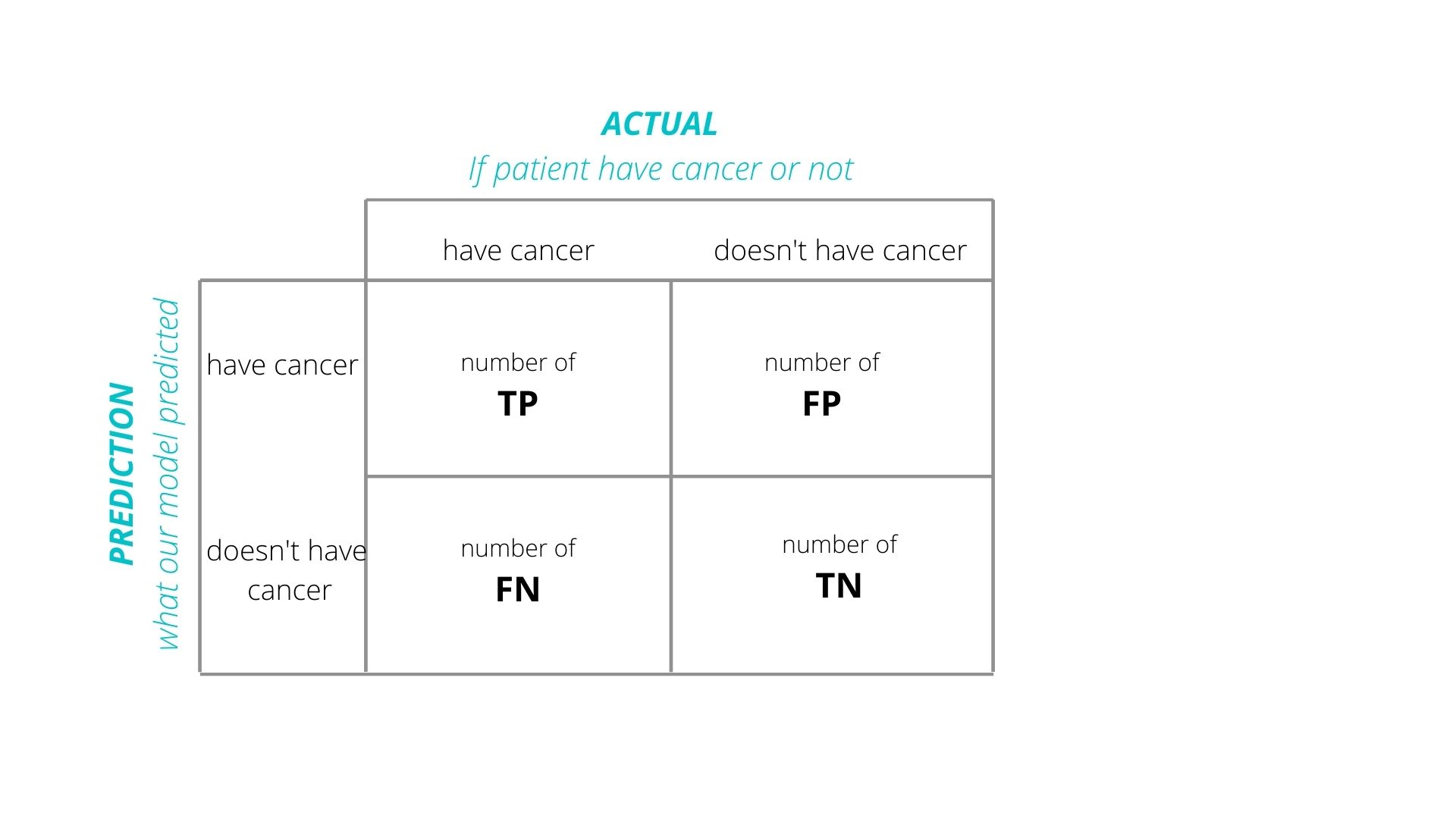

A confusion matrix is a table used in machine learning to evaluate the performance of a classification model. In the layout used here, each row of the matrix is a predicted class and each column is an actual class; some libraries and textbooks use the transposed layout, so check the convention before reading a matrix. For a binary classifier, the matrix has four cells, each holding a count: true positives (TP), true negatives (TN), false positives (FP), and false negatives (FN).
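As a minimal sketch of how the four counts can be tallied for a binary classifier, the plain-Python example below compares a list of actual labels with a list of predicted labels. The function names `confusion_counts` and `as_matrix` and the sample labels are made up for illustration; the matrix is laid out with predicted classes as rows and actual classes as columns, positive class first.

```python
def confusion_counts(actual, predicted, positive=1):
    """Tally TP, TN, FP, FN for a binary task (hypothetical helper)."""
    tp = tn = fp = fn = 0
    for a, p in zip(actual, predicted):
        if p == positive and a == positive:
            tp += 1          # predicted positive, actually positive
        elif p == positive:
            fp += 1          # predicted positive, actually negative
        elif a == positive:
            fn += 1          # predicted negative, actually positive
        else:
            tn += 1          # predicted negative, actually negative
    return tp, tn, fp, fn


def as_matrix(tp, tn, fp, fn):
    """Rows are predicted classes, columns are actual classes."""
    return [[tp, fp],   # predicted positive
            [fn, tn]]   # predicted negative


# Illustrative labels only, not real data.
actual    = [1, 0, 1, 1, 0, 0, 1, 0]
predicted = [1, 0, 0, 1, 0, 1, 1, 0]

tp, tn, fp, fn = confusion_counts(actual, predicted)
print(as_matrix(tp, tn, fp, fn))  # [[3, 1], [1, 3]]
```

The diagonal holds the correct predictions (TP and TN); the off-diagonal cells hold the two kinds of error.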

Beyond a single score, a confusion matrix shows how many errors a model makes in classifying data points and of which kind, that is, which classes are mistaken for which. This makes it well suited to comparing two classification methods on the same dataset.

The accuracy of a model is calculated by summing the number of true positives and true negatives and dividing this sum by the total number of data points. The confusion matrix also makes it possible to calculate other metrics, such as precision (the ratio of true positives to all samples classified as positive, TP / (TP + FP)) and recall (the ratio of true positives to all samples that are actually positive, TP / (TP + FN)).
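The three metrics can be sketched directly from the four counts. The snippet below is an illustration rather than a reference implementation: the function names and the counts (TP = 40, TN = 45, FP = 5, FN = 10) are invented for the example, precision is taken as TP / (TP + FP), and recall as TP / (TP + FN), that is, true positives over all samples that are actually positive. Returning 0.0 when a denominator is zero is an assumption made here to keep the sketch simple.

```python
def accuracy(tp, tn, fp, fn):
    """(TP + TN) divided by the total number of data points."""
    total = tp + tn + fp + fn
    return (tp + tn) / total if total else 0.0


def precision(tp, fp):
    """TP divided by everything classified as positive."""
    denom = tp + fp
    return tp / denom if denom else 0.0


def recall(tp, fn):
    """TP divided by everything that is actually positive."""
    denom = tp + fn
    return tp / denom if denom else 0.0


# Made-up counts for illustration.
tp, tn, fp, fn = 40, 45, 5, 10

print(round(accuracy(tp, tn, fp, fn), 3))  # 0.85
print(round(precision(tp, fp), 3))         # 0.889
print(round(recall(tp, fn), 3))            # 0.8
```

With these counts, precision is higher than recall: the hypothetical model is rarely wrong when it predicts the positive class, but it misses 10 of the 50 actual positives.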

The confusion matrix is also useful for analyzing the performance of individual classifiers and for comparing different classification techniques. It can help to identify models that are prone to overfitting or underspecification, for example when the matrix computed on unseen data shows far more errors than the one computed on the training data. It can also be used to assess how well a model classifies unseen data and to judge whether more training data is needed to improve accuracy further.

Confusion matrices are used in a wide range of fields, including marketing, finance, medicine, engineering, and statistics. They are particularly popular in machine learning, since they provide a visual representation of the performance of different classifiers on a given dataset.