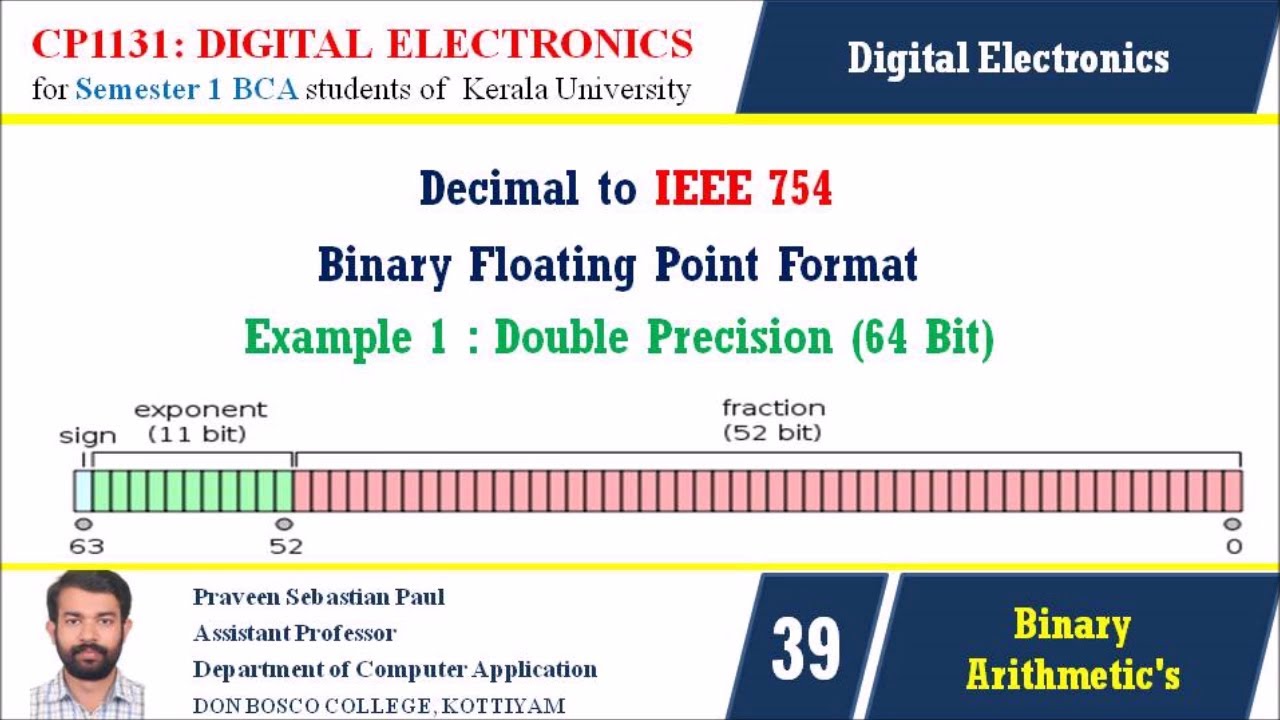

Double-precision floating-point format is a computer number format that occupies 8 bytes (64 bits) in memory and represents a wide dynamic range of values by using a floating radix point. It is a standard format for storing numerical data in computers and is commonly called double; IEEE 754 names it binary64.

The double format can represent finite numbers between approximately -1.7976931348623157 × 10^308 and +1.7976931348623157 × 10^308. The smallest positive normal value is approximately 2.2250738585072014 × 10^-308.
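The limits of the range can be inspected directly. The following is a minimal sketch, assuming a Python implementation whose `float` type is an IEEE 754 double (true of CPython on mainstream platforms); the helper `overflows_to_infinity` is a hypothetical name introduced only for illustration.

```python
import math
import sys

# Largest finite double: (2 - 2**-52) * 2**1023.
largest = sys.float_info.max
# Smallest positive normal double: 2**-1022.
smallest_normal = sys.float_info.min


def overflows_to_infinity(x: float) -> bool:
    """Return True if doubling x leaves the finite range (hypothetical helper)."""
    return math.isinf(x * 2.0)


print(largest)                         # 1.7976931348623157e+308
print(smallest_normal)                 # 2.2250738585072014e-308
print(overflows_to_infinity(largest))  # True
```

Multiplying the largest finite value by 2 does not wrap around; the result is the special value infinity.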

The double format is not built from two single-precision numbers. It is a single 64-bit value whose layout is defined by the IEEE 754 standard: 1 sign bit, 11 exponent bits, and 52 fraction (significand) bits. The most significant bit is the sign bit: 0 denotes a positive number and 1 a negative number, which is how both positive and negative values are represented. The exponent is stored with a bias of 1023, and normal numbers carry an implicit leading 1 bit, giving 53 bits of significand precision.
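To illustrate how the 64 bits are laid out under IEEE 754 binary64 (1 sign bit, 11 exponent bits, 52 fraction bits), the sketch below reinterprets a double as an unsigned 64-bit integer and extracts the three fields. The function name `decompose` is an assumption made for this example, not part of any standard API.

```python
import struct


def decompose(x: float) -> tuple[int, int, int]:
    """Split a double into (sign, biased exponent, fraction) bit fields."""
    # Reinterpret the 8 bytes of the double as one unsigned 64-bit integer.
    (bits,) = struct.unpack(">Q", struct.pack(">d", x))
    sign = bits >> 63                  # 1 bit
    exponent = (bits >> 52) & 0x7FF    # 11 bits, biased by 1023
    fraction = bits & ((1 << 52) - 1)  # 52 bits
    return sign, exponent, fraction


print(decompose(1.0))   # (0, 1023, 0)
print(decompose(-2.0))  # (1, 1024, 0)
print(decompose(0.5))   # (0, 1022, 0)
```

Subtracting the bias of 1023 from the stored exponent gives the true power of two: 0 for 1.0, 1 for -2.0, and -1 for 0.5.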

The double-precision format not only covers a greater range of numbers than the single-precision format, but also provides more precision. Double-precision numbers have a 53-bit significand, which corresponds to approximately 15 to 17 significant decimal digits, more than twice the roughly 7 digits of single-precision numbers (24-bit significand). As a result, double precision is the standard choice when greater precision is required.
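The difference in precision can be seen by rounding a double to single precision and back. This is a minimal sketch, assuming Python's `float` is an IEEE 754 double and that the `struct` format `"f"` is the 4-byte IEEE 754 single; `round_to_single` is a hypothetical helper name.

```python
import struct
import sys


def round_to_single(x: float) -> float:
    """Round a double to the nearest single-precision value and back."""
    return struct.unpack("f", struct.pack("f", x))[0]


value = 0.1
print(repr(value))                   # 0.1
print(repr(round_to_single(value)))  # 0.10000000149011612
print(sys.float_info.mant_dig)       # 53 significand bits
print(sys.float_info.dig)            # 15 decimal digits always preserved
```

The single-precision result matches 0.1 to only about 8 significant digits, while the double holds the nearest representable value to about 17 digits.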

Double-precision format is now among the most commonly used floating-point formats, supported by nearly all modern CPUs and GPUs. It is also widely used for exchanging numerical data between programs, and is commonly encountered in programming language standard libraries, mathematical functions, and numerical libraries.