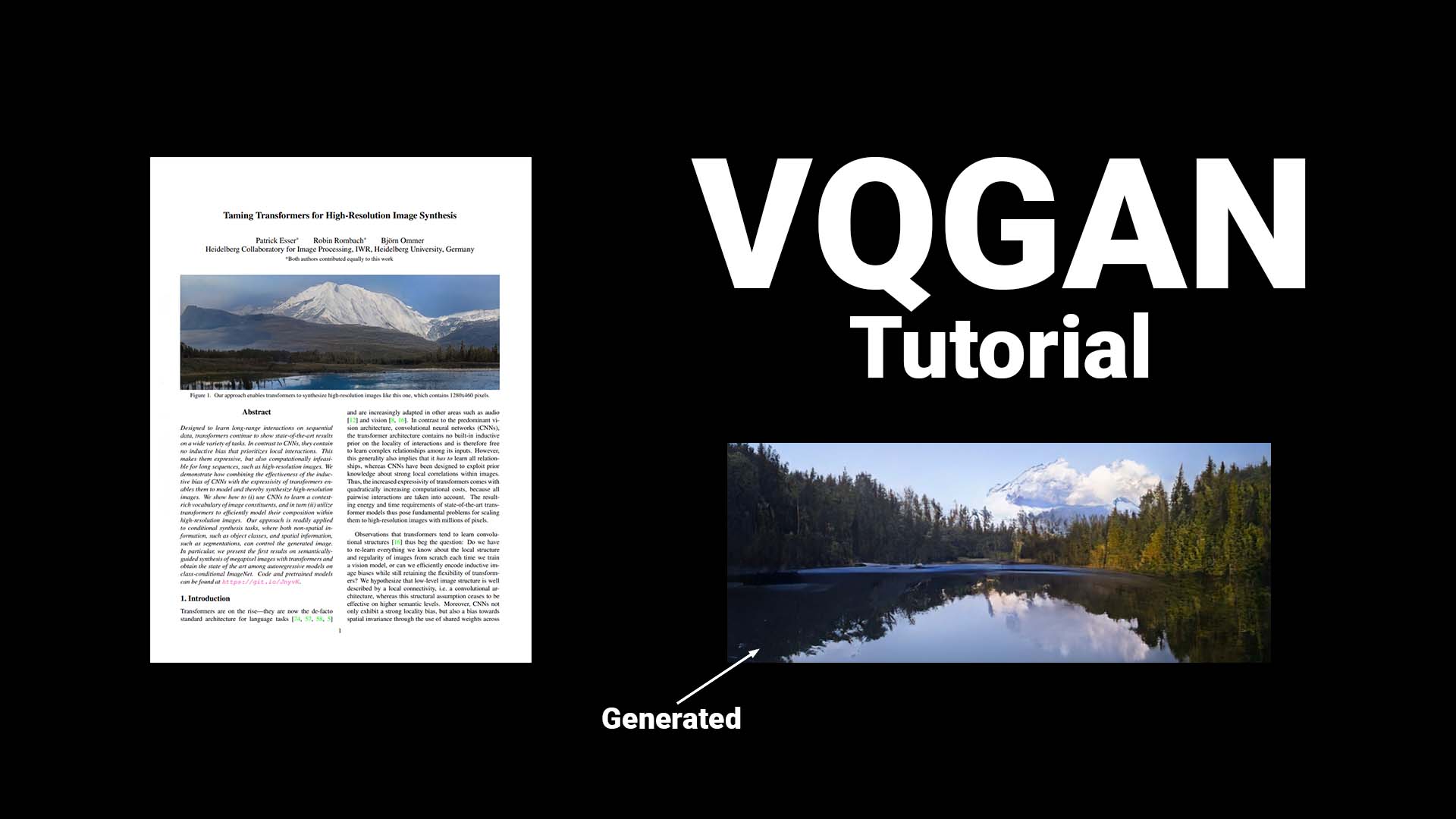

Vector Quantized Generative Adversarial Network (VQGAN) is a deep learning model for generating high-quality images. VQGAN was introduced by Patrick Esser, Robin Rombach, and Björn Ommer at Heidelberg University in the paper "Taming Transformers for High-Resolution Image Synthesis". It combines ideas from two techniques, Variational Autoencoders (VAEs) and Vector Quantization (VQ), as in the earlier VQ-VAE model, and trains the result adversarially in the manner of a Generative Adversarial Network (GAN).

A VAE-style encoder maps the original image to a smaller latent representation, which is then compressed with VQ: each latent vector is replaced by its nearest entry in a learned codebook. VQ has been proposed as a way to reduce the size of images with little loss of quality, which makes it especially useful for image processing tasks. VQGAN combines the strengths of VAEs and VQ in a GAN whose generator is capable of producing more realistic images.
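The quantization step can be illustrated with a minimal sketch. It assumes a tiny hand-written codebook and squared Euclidean distance; the names `quantize`, `dequantize`, `codebook`, and `latents` are hypothetical and chosen for illustration. In a real model the codebook is learned and the latents come from a trained encoder.

```python
def quantize(vectors, codebook):
    """Return, for each vector, the index of its nearest codebook entry."""
    indices = []
    for v in vectors:
        # Squared Euclidean distance from v to every codebook entry.
        dists = [sum((a - b) ** 2 for a, b in zip(v, c)) for c in codebook]
        indices.append(dists.index(min(dists)))
    return indices


def dequantize(indices, codebook):
    """Look each index up in the codebook to recover the quantized vectors."""
    return [codebook[i] for i in indices]


# Toy codebook with three 2-dimensional entries (an assumption, not learned).
codebook = [[0.0, 0.0], [1.0, 1.0], [0.0, 1.0]]

# Toy "encoder outputs" standing in for the latent vectors of an image.
latents = [[0.1, -0.2], [0.9, 1.2], [0.2, 0.8]]

indices = quantize(latents, codebook)
reconstructed = dequantize(indices, codebook)
print(indices)
print(reconstructed)
```

Only the integer indices need to be stored or modeled downstream, which is where the compression comes from: each latent vector is reduced to a single codebook index.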

VQGAN leverages several key components of the GAN architecture, such as adversarial training, in which the generator uses feedback from a discriminator, together with the training data, to improve the quality of the generated images. The model also works with the data at multiple scales: by leveraging learned representations, it can reproduce fine details while also capturing global patterns.
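The adversarial feedback described above can be sketched with the classic GAN losses. This is a generic illustration, not VQGAN's exact objective, which also includes reconstruction and codebook terms. The function names are hypothetical, and the discriminator outputs are assumed to be probabilities strictly between 0 and 1 that an image is real.

```python
import math


def discriminator_loss(d_real, d_fake):
    """Binary cross-entropy: reward high scores on real images, low on fakes."""
    real_term = sum(math.log(p) for p in d_real) / len(d_real)
    fake_term = sum(math.log(1.0 - p) for p in d_fake) / len(d_fake)
    return -(real_term + fake_term)


def generator_loss(d_fake):
    """Non-saturating generator loss: lower when the discriminator is fooled."""
    return -sum(math.log(p) for p in d_fake) / len(d_fake)


# Hypothetical discriminator scores for a batch of generated images.
weak_fakes = [0.1, 0.2]    # discriminator easily spots these as fake
strong_fakes = [0.8, 0.9]  # discriminator mostly believes these are real

print(generator_loss(weak_fakes))
print(generator_loss(strong_fakes))
```

The generator's loss drops as the discriminator's scores for generated images rise, which is the feedback signal that pushes the generator toward more realistic output.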

The practical applications of VQGAN range from image manipulation and synthesis to medical imaging, video editing, and industrial design. Because it can generate high-quality, realistic images, VQGAN is frequently used by researchers in the field of AI.