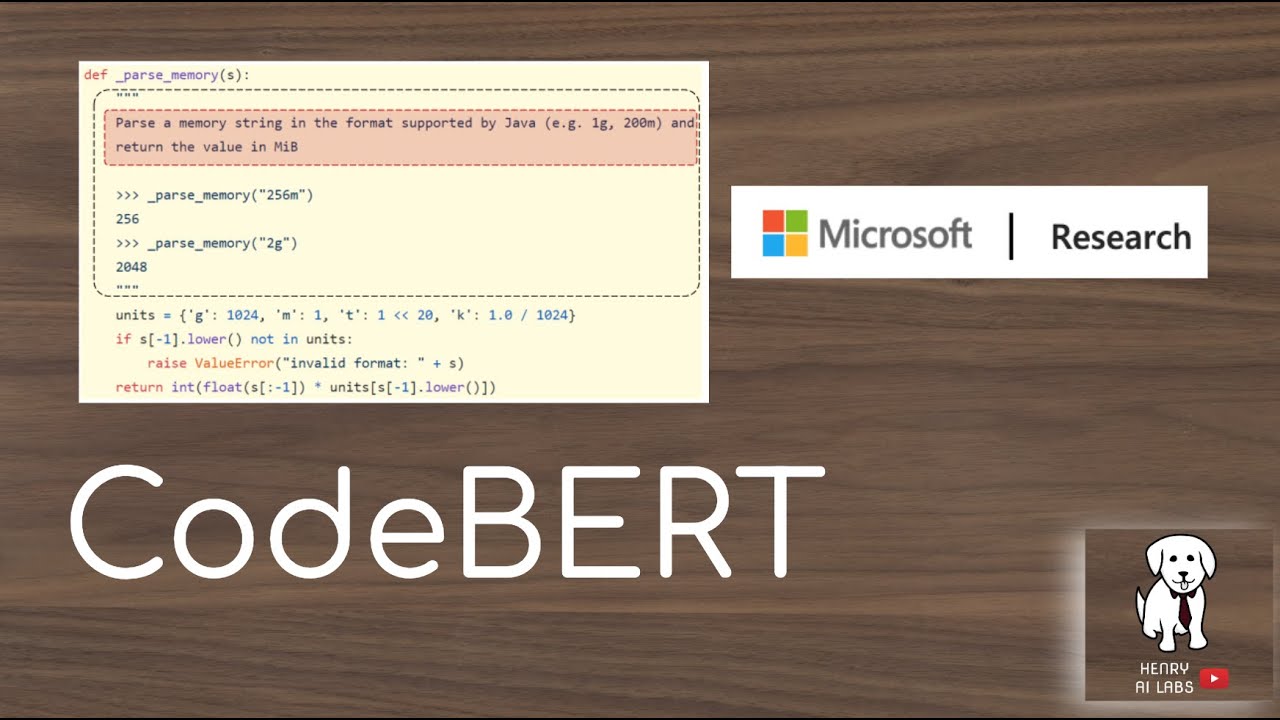

CodeBERT is an open-source pre-trained model for programming languages (PL) and natural language (NL), developed by Microsoft Research. The name is not an acronym: it denotes a BERT-style model for code. It was designed for tasks that connect text and source code, such as natural language code search and code documentation generation. Like other models in the BERT family, CodeBERT uses a bidirectional Transformer encoder to produce contextual embeddings, in this case for both natural language and code.

CodeBERT is a multi-layer bidirectional Transformer encoder with the same architecture as RoBERTa-base, itself a variant of Google Research's BERT. It differs from BERT in what it is trained on and how: it is pre-trained on paired natural-language documentation and code, as well as on code alone, using masked language modeling together with a replaced token detection objective. Because self-attention relates every token in the input to every other token, CodeBERT can capture long-range relationships as well as local syntax, both in code and in the text that describes it.
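To make the input format concrete, here is a minimal sketch of how a natural-language segment and a code segment can be joined into one sequence, following the CLS + NL + SEP + code + EOS layout described for CodeBERT. The helper names `build_bimodal_tokens` and `embed_pair` and the simple truncation rule are assumptions made for illustration; `microsoft/codebert-base` is the checkpoint published on the Hugging Face Hub, and the second function only runs if `torch` and `transformers` are installed and the checkpoint can be downloaded.

```python
from typing import List

# Special tokens of the RoBERTa vocabulary, which CodeBERT reuses.
CLS, SEP, EOS = "<s>", "</s>", "</s>"


def build_bimodal_tokens(nl_tokens: List[str], code_tokens: List[str],
                         max_len: int = 512) -> List[str]:
    """Join the two segments as CLS + NL + SEP + code + EOS.

    Truncation here is a simplification (assumption): the NL segment is kept
    first and the code segment gets whatever room is left.
    """
    budget = max_len - 3  # reserve room for the three special tokens
    nl = nl_tokens[:budget]
    code = code_tokens[: budget - len(nl)]
    return [CLS] + nl + [SEP] + code + [EOS]


def embed_pair(nl: str, code: str):
    """Hypothetical helper: contextual embeddings for one NL-code pair.

    Imports are deferred so the pure-Python helper above stays usable
    without the heavy dependencies.
    """
    import torch
    from transformers import AutoModel, AutoTokenizer

    tokenizer = AutoTokenizer.from_pretrained("microsoft/codebert-base")
    model = AutoModel.from_pretrained("microsoft/codebert-base")
    tokens = build_bimodal_tokens(tokenizer.tokenize(nl), tokenizer.tokenize(code))
    ids = torch.tensor([tokenizer.convert_tokens_to_ids(tokens)])
    with torch.no_grad():
        return model(ids)[0]  # last hidden state: (1, sequence_length, hidden_size)
```

Calling `embed_pair("return maximum value", "def max(a, b): return a if a > b else b")` would return one vector per token; the vector at position 0 is commonly used as a summary of the whole pair.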

CodeBERT has reported strong results on tasks that combine natural language and code. In the original paper, the fine-tuned model outperformed the baselines it was compared against on natural language code search and on code documentation generation, evaluated on six programming languages (Python, Java, JavaScript, PHP, Ruby, and Go) from the CodeSearchNet corpus.
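Retrieval-style tasks such as natural language code search come down to ranking candidates against a query. The sketch below ranks snippets by cosine similarity between embedding vectors. Note the assumptions: this is a generic embedding-similarity ranking, not the fine-tuning setup used in the CodeBERT paper, and the three-dimensional vectors and snippet names are toy values standing in for real model output.

```python
import math
from typing import Dict, List, Sequence, Tuple


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity of two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def rank_snippets(query_vec: Sequence[float],
                  snippet_vecs: Dict[str, Sequence[float]]) -> List[Tuple[str, float]]:
    """Return (snippet name, score) pairs, most similar to the query first."""
    scored = [(name, cosine(query_vec, vec)) for name, vec in snippet_vecs.items()]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


# Toy embeddings (made up for illustration, not produced by CodeBERT).
query = [1.0, 0.0, 1.0]  # e.g. "find the largest element"
snippets = {
    "max_of_list": [0.9, 0.1, 0.8],
    "read_file": [0.0, 1.0, 0.1],
    "sort_list": [0.5, 0.5, 0.5],
}

ranking = rank_snippets(query, snippets)
print([name for name, _ in ranking])  # ['max_of_list', 'sort_list', 'read_file']
```

In practice the vectors would come from an encoder, and the candidate embeddings would be computed once and cached so that only the query needs to be encoded at search time.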

CodeBERT may also be useful in related settings, such as question answering about code and conversational assistants for developers. It is reportedly being evaluated on a number of real-world tasks, such as customer service bots and question answering systems.

To sum up, CodeBERT is an open-source pre-trained model that uses a bidirectional Transformer encoder to produce embeddings for both natural language and source code. It has shown strong performance on code search and code documentation generation, and it has potential applications in many other tasks that connect text and code.