A Gaussian process (GP) is a collection of random variables, indexed by a continuous or discrete set such as time or space, with the property that any finite subset of them has a joint multivariate normal distribution. GPs are best known from machine learning, but they are also widely used in Bayesian optimization, spatial statistics, and regression analysis.
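
To make the definition concrete, here is a minimal sketch that evaluates a zero-mean GP at a finite grid of inputs and draws samples from the resulting multivariate normal. The squared-exponential kernel, the helper name `rbf_kernel`, and its `length_scale` and `variance` parameters are illustrative assumptions, not something the text above prescribes.

```python
import numpy as np

def rbf_kernel(xa, xb, length_scale=1.0, variance=1.0):
    """Squared-exponential covariance between two 1-D input arrays (assumed kernel)."""
    sq_dist = (xa[:, None] - xb[None, :]) ** 2
    return variance * np.exp(-0.5 * sq_dist / length_scale**2)

rng = np.random.default_rng(0)

# A finite set of index points: the GP restricted to these is multivariate normal.
x = np.linspace(0.0, 5.0, 50)

# Covariance matrix of the function values at x; a small jitter keeps it
# numerically positive definite so the Cholesky factorization succeeds.
K = rbf_kernel(x, x) + 1e-6 * np.eye(x.size)

# Draw three sample functions from N(0, K) via the Cholesky factor.
L = np.linalg.cholesky(K)
samples = L @ rng.standard_normal((x.size, 3))  # shape (50, 3), one column per draw
```

Each column of `samples` is one plausible function evaluated on the grid. A shorter `length_scale` would give wigglier draws, a longer one smoother draws.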

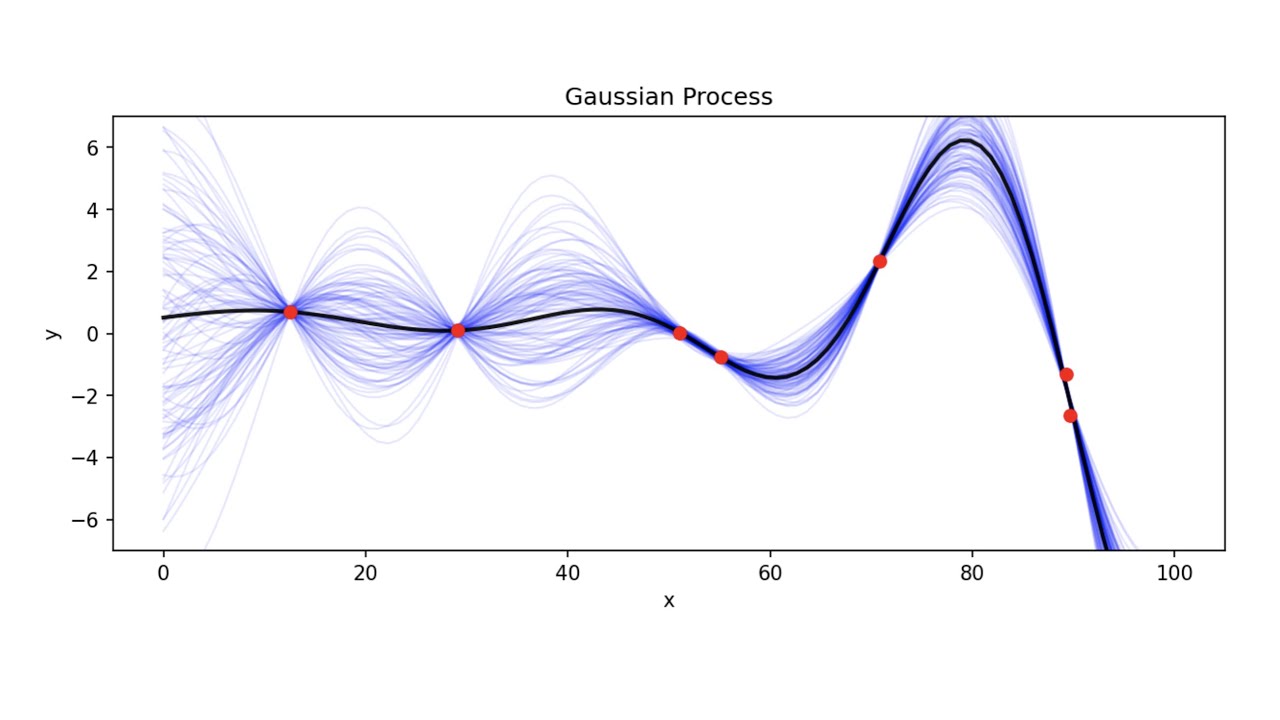

A Gaussian process defines a probability distribution over functions. Given a set of observed data (and assuming Gaussian observation noise), the function's value at any new input is normally distributed, with a mean and variance that depend on the observations. This makes it possible to predict values that have not yet been observed and to quantify how confident those predictions are. GPs are also non-parametric: rather than fixing a functional form with a finite set of parameters, they place a prior distribution directly over functions, with assumptions such as smoothness encoded in the choice of mean and covariance (kernel) functions.
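
The prediction step described above can be sketched with the standard conditioning formulas for a multivariate normal. This is a minimal illustration under stated assumptions: a zero prior mean, a squared-exponential kernel, and Gaussian noise with variance `noise_var`. The function names `rbf_kernel` and `gp_posterior` and the toy data are hypothetical.

```python
import numpy as np

def rbf_kernel(xa, xb, length_scale=1.0, variance=1.0):
    """Squared-exponential covariance between two 1-D input arrays (assumed kernel)."""
    sq_dist = (xa[:, None] - xb[None, :]) ** 2
    return variance * np.exp(-0.5 * sq_dist / length_scale**2)

def gp_posterior(x_train, y_train, x_test, noise_var=1e-4):
    """Posterior mean and pointwise variance of a zero-mean GP at x_test."""
    K = rbf_kernel(x_train, x_train) + noise_var * np.eye(x_train.size)
    K_s = rbf_kernel(x_train, x_test)    # train/test cross-covariance
    K_ss = rbf_kernel(x_test, x_test)    # prior covariance at the test inputs

    # Solve with a Cholesky factor instead of forming an explicit inverse.
    L = np.linalg.cholesky(K)
    alpha = np.linalg.solve(L.T, np.linalg.solve(L, y_train))
    mean = K_s.T @ alpha

    v = np.linalg.solve(L, K_s)
    cov = K_ss - v.T @ v
    return mean, np.diag(cov)

# Toy data: a few observations of sin(x).
x_train = np.array([0.0, 1.0, 2.5, 4.0])
y_train = np.sin(x_train)

# One test point at an observed input, one between observations, one far away.
x_test = np.array([1.0, 3.0, 8.0])
mean, var = gp_posterior(x_train, y_train, x_test)
```

In this sketch the predictive variance is small at the observed input and reverts towards the prior variance far from the data, which is the behaviour that lets a GP report how confident each prediction is.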

GPs are applied to regression and other prediction problems in which unobserved values must be inferred from prior knowledge and existing observations. They are useful here because they quantify uncertainty: instead of a single point prediction, a GP returns a probability distribution over possible outcomes. They are also commonly used in Bayesian optimization, a technique for optimizing functions that are expensive to evaluate (for example, tuning a model's hyperparameters), where the GP serves as a surrogate for the unknown objective. In spatial statistics, GPs are used to model how a variable is correlated across locations.

Gaussian processes are an important tool in machine learning because they can model complex functions without committing to a specific parametric form. This makes them suitable when the exact form of the underlying function is unknown or difficult to specify, although the choice of kernel still encodes assumptions such as smoothness or periodicity. GPs are also theoretically appealing: they offer a principled Bayesian way to combine prior knowledge with observed data when making predictions and decisions.