An N-gram is a contiguous sequence of N items, usually words or characters, taken from a text. N-grams are widely used in computational linguistics and natural language processing (NLP). For example, a two-word N-gram (a bigram) is a pair of adjacent words such as “red apple”. Counting N-grams measures how frequently word or phrase patterns occur in a given corpus.
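To make the definition concrete, here is a minimal sketch of extracting word N-grams from a sentence. The function name `ngrams` and the whitespace tokenization are assumptions made for illustration; real pipelines usually use a proper tokenizer.

```python
def ngrams(tokens, n):
    """Return the contiguous n-grams (as tuples) of a token sequence."""
    if n < 1:
        raise ValueError("n must be at least 1")
    # Slide a window of width n across the tokens, one position at a time.
    return [tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)]


# Assumption: splitting on whitespace is good enough for this toy sentence.
tokens = "the red apple fell from the tree".split()
bigrams = ngrams(tokens, 2)
trigrams = ngrams(tokens, 3)
```

With seven tokens this yields six bigrams, including `("red", "apple")`, and five trigrams. A sequence shorter than `n` yields an empty list.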

N-grams are used in various areas of computational linguistics such as language modeling, spelling correction, and text mining. A common application is finding patterns and relationships in large corpora of text. For instance, N-grams can be used to detect plagiarism, identify topic-specific words, and build language models.

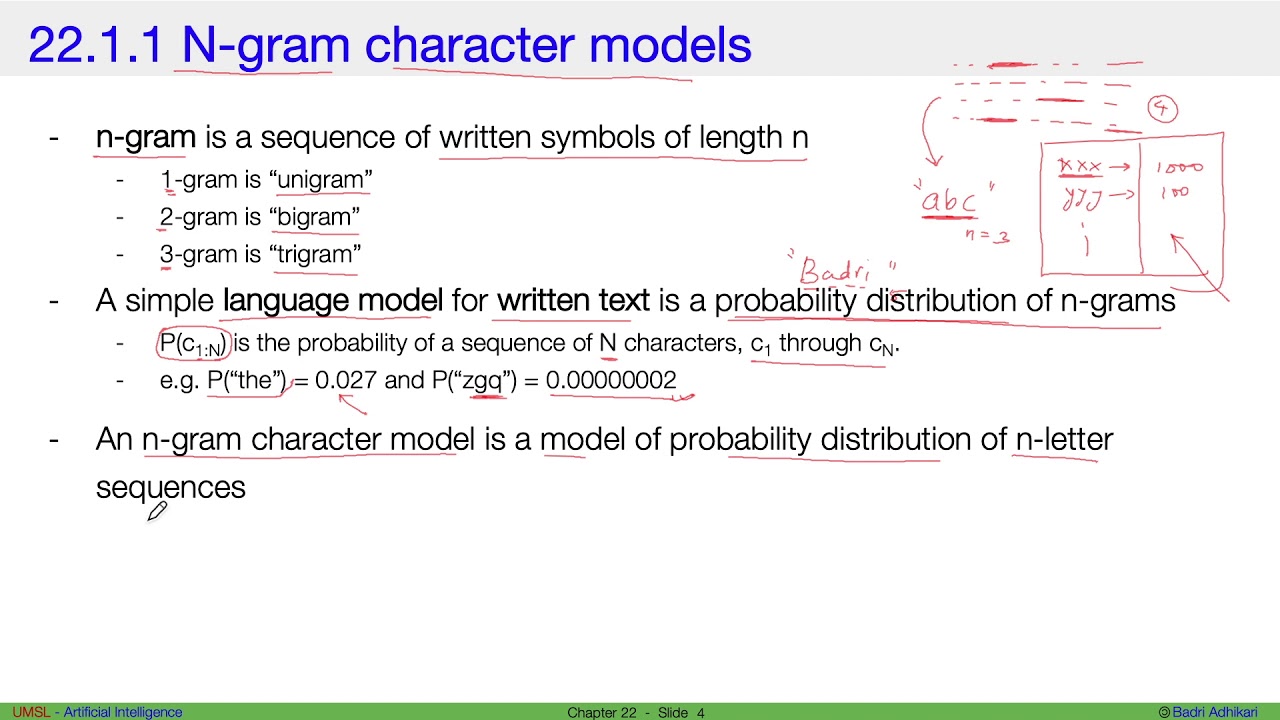

In language modeling, N-grams are used to build a model of how likely words are to appear in a given context. An N-gram model estimates the conditional probability of a word given the N-1 words that precede it; in a bigram model, this is the probability of a word appearing after a single preceding word. The goal of language modeling is to assign probabilities to word sequences, and an N-gram model does this from simple counts rather than with a more complicated statistical model.
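As an illustration of the idea, the sketch below estimates bigram probabilities by plain counting (a maximum-likelihood estimate with no smoothing). The function names, the `<s>` and `</s>` boundary markers, and the toy corpus are all assumptions for this example, not part of any particular library.

```python
from collections import Counter


def train_bigram_model(sentences):
    """Count how often each word occurs as a context and each bigram occurs."""
    context_counts = Counter()
    bigram_counts = Counter()
    for sentence in sentences:
        # Assumed boundary markers so sentence starts and ends are modeled too.
        tokens = ["<s>"] + sentence.lower().split() + ["</s>"]
        for prev, word in zip(tokens, tokens[1:]):
            context_counts[prev] += 1
            bigram_counts[(prev, word)] += 1
    return context_counts, bigram_counts


def bigram_probability(prev, word, context_counts, bigram_counts):
    """Estimate P(word | prev) as count(prev, word) / count(prev)."""
    if context_counts[prev] == 0:
        return 0.0  # unseen context; a real model would apply smoothing here
    return bigram_counts[(prev, word)] / context_counts[prev]


corpus = ["the red apple", "the red car", "the green apple"]
context_counts, bigram_counts = train_bigram_model(corpus)
p_red_after_the = bigram_probability("the", "red", context_counts, bigram_counts)
```

In this toy corpus "the" is followed by "red" in two of its three occurrences, so the estimate is 2/3. Unseen bigrams get probability zero under this scheme, which is why practical models add smoothing or backoff.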

In text mining, N-grams are used to determine statistical properties of a corpus. They can be used to find the most frequent words and phrases, to measure how often particular words appear, and as features for detecting the sentiment of a text.
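The frequency statistics described above can be sketched with the standard library's `collections.Counter`. The helper name `ngram_counts`, the simple regular-expression tokenizer, and the sample text are assumptions chosen for illustration.

```python
import re
from collections import Counter


def ngram_counts(text, n):
    """Count every contiguous n-gram of lowercase word tokens in the text."""
    # Assumed tokenizer: runs of letters, digits, and apostrophes.
    tokens = re.findall(r"[a-z0-9']+", text.lower())
    # zip over n staggered views of the token list yields the n-grams.
    # Note: punctuation is dropped, so n-grams may span sentence boundaries.
    return Counter(zip(*(tokens[i:] for i in range(n))))


text = "The apple is red. The apple is sweet. The pear is green."
unigram_counts = ngram_counts(text, 1)
bigram_counts = ngram_counts(text, 2)
top_bigrams = bigram_counts.most_common(2)
```

Keys are tuples, so a single word is looked up as `unigram_counts[("the",)]`. Counts like these can also serve as input features for a downstream classifier, for example one that predicts sentiment.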

Overall, N-grams are a powerful tool in computational linguistics and NLP, used to explore textual data, build language models, and more.